Overtime overruns alone threaten to siphon $1.05 billion from U.S. home- and community-care budgets this year, says Avalere Health—proof that shaky patient-caregiver matching is no longer just an operational headache but a bottom-line crisis.

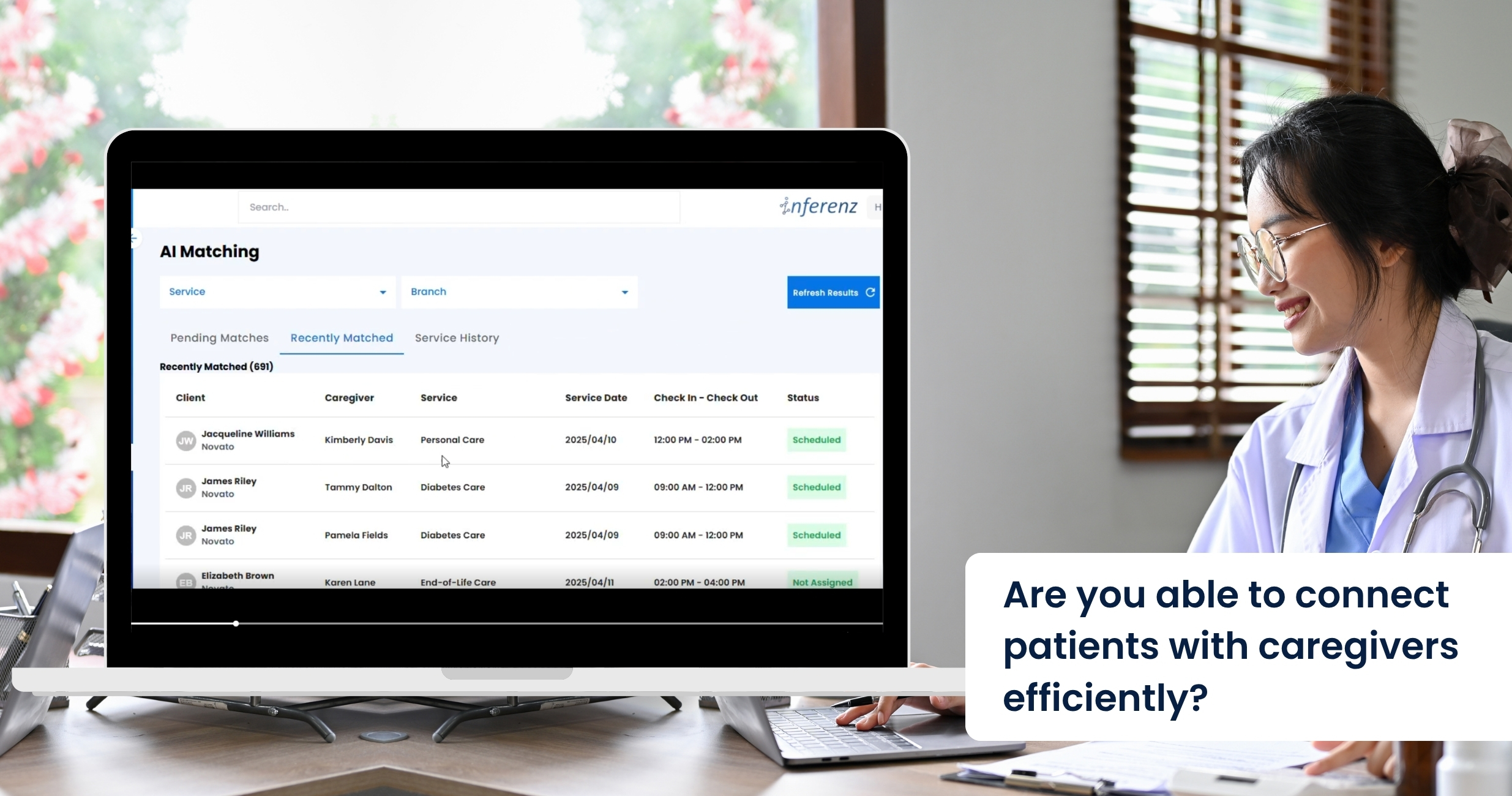

Schedulers still juggle phone calls, spreadsheets, and rule-based software that crumbles when a caregiver calls in sick. A comprehensive caregiver connect solution powered by agentic AI can flip that script. It watches every shift, learns from each match, and plugs gaps in minutes. No frantic dial-around, no client left waiting. That is the kind of efficiency AI can bring to healthcare.

Problems Faced by the Homecare Industry in Scheduling Appointments

Modern EMR and AMS platforms capture plenty of data, yet most still fail at turning that data into fast, smart schedules. When we ask schedulers and field staff where things fall apart, three patterns rise to the top.

Manual firefighting

A single caregiver call-out often touches four or five tools: phone, text thread, spreadsheet, agency software, and finally an “all-staff” blast message. In practice, the rescue takes two to four hours, during which the client risks a missed visit. Home care organizations call this weekend scramble “unsustainable” and link it to high office burnout.

Every unfilled hour can cost $25–$40 in lost billing. Late or missed wound-care checks raise hospital readmission odds by up to 15% (Loving Home Care study). Schedulers report after-hours stress as a top quit trigger; when one quits, five caregivers follow.

Data silos

Most schedulers never see real-time clinical flags. A recent report mentions that only about one in three U.S. home-care agencies has a point-of-care EHR that talks to its patient scheduling tool. The rest rely on notes or phone calls.

Result:

- A wound-care alert sits in the EHR while the AMS assigns a basic aide.

- Medication-change notices arrive hours after the caregiver has left.

- Coordinators must cross-check two or three systems before offering a shift, slowing coverage.

Duplicate patient records add further chaos and confusion to scheduling, a problem our AI data deduplication solution is built to address.

Without unified data, matches ignore skills that matter most—like current wound-vac certs, language fit, or post-surgery protocols.

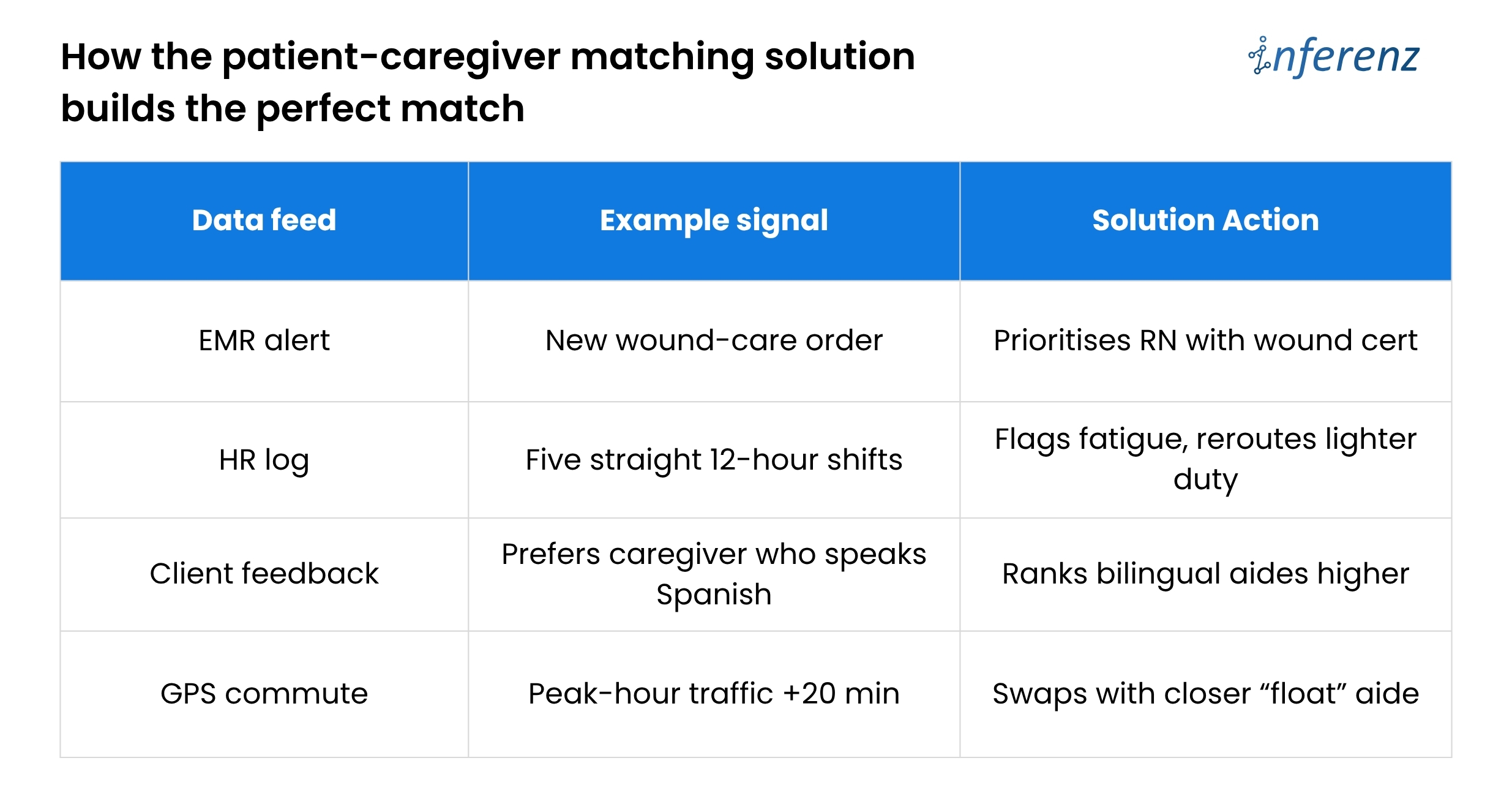

Burnout churn

Shift imbalance drives turnover faster than pay issues. The 2024 Activated Insights Benchmarking Report put caregiver turnover at 79%—the highest in six years. Schedulers themselves are leaving too; agencies that lose a scheduler often see a linked caregiver exodus.

Poor balance means good aides get overbooked, newer aides sit idle, and both groups start scanning job boards. Until schedules pull live clinical data, automate call-out recovery, and watch workload signals, agencies will keep paying for empty visits and exit interviews.

The rest of this article outlines how predictive care in the Patient Caregiver Matching solution fixes those three weak spots.

What Is Agentic AI?

Think of it as a digital care coordinator that can perceive, decide, and act without waiting for humans. Unlike first-gen AI healthcare companies that graft models onto old software, a true agentic layer:

- Learns from every shift – Outcome scores, travel times, and client feedback loop back into the model.

- Optimizes for many goals at once – It balances continuity, cost, and worker well-being instead of chasing only fill rate.

- Acts in real time – If traffic halts Nurse Maya, the agent reroutes someone closer and messages all parties automatically.

The result is a living schedule that keeps adapting—no stale rule set, no bias from tired staff.

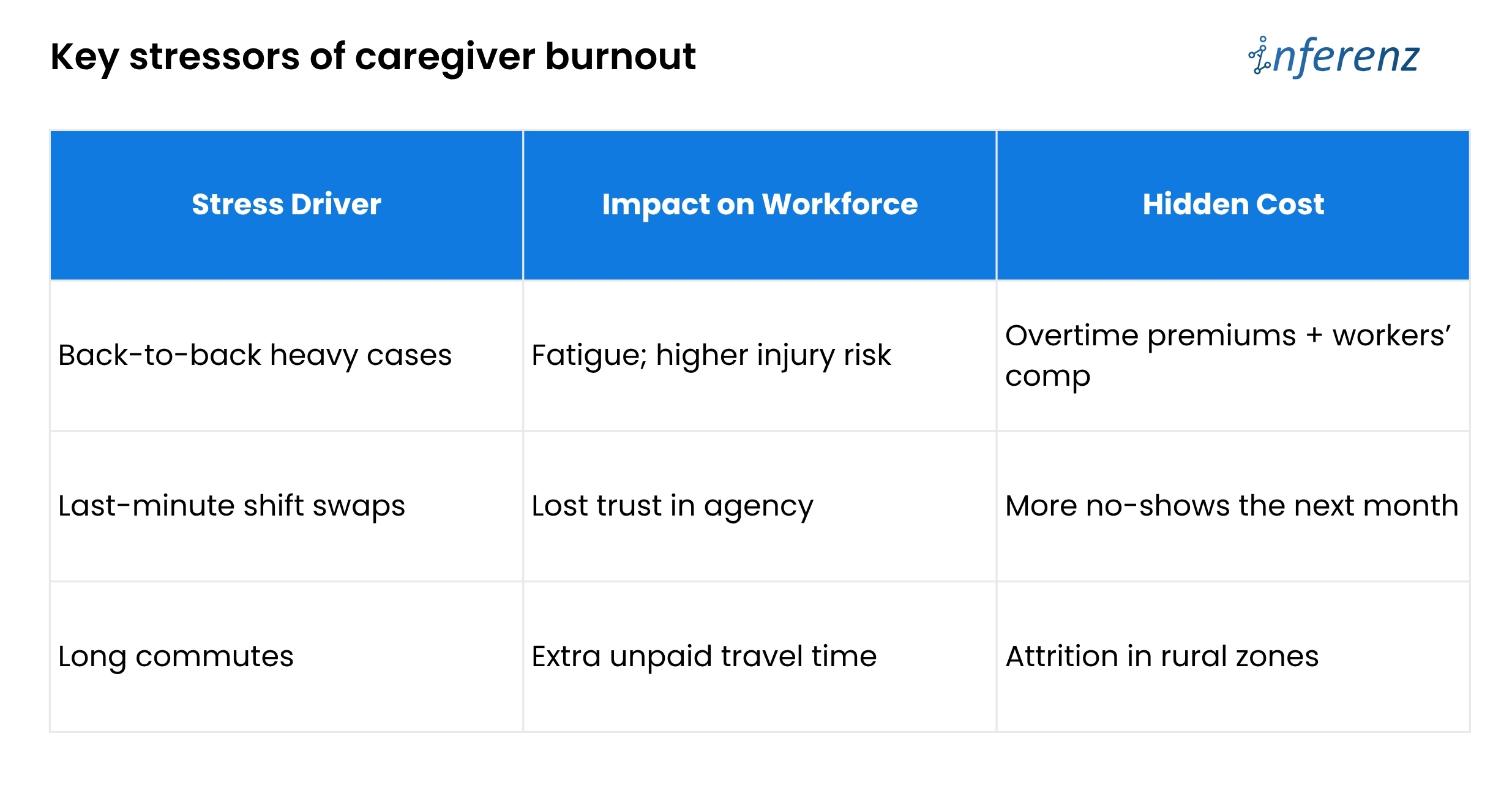

Traditional AMS vs. Agentic AI

Bottom line: Outdated tools act like a notebook. An AI engine acts like a live dispatcher.

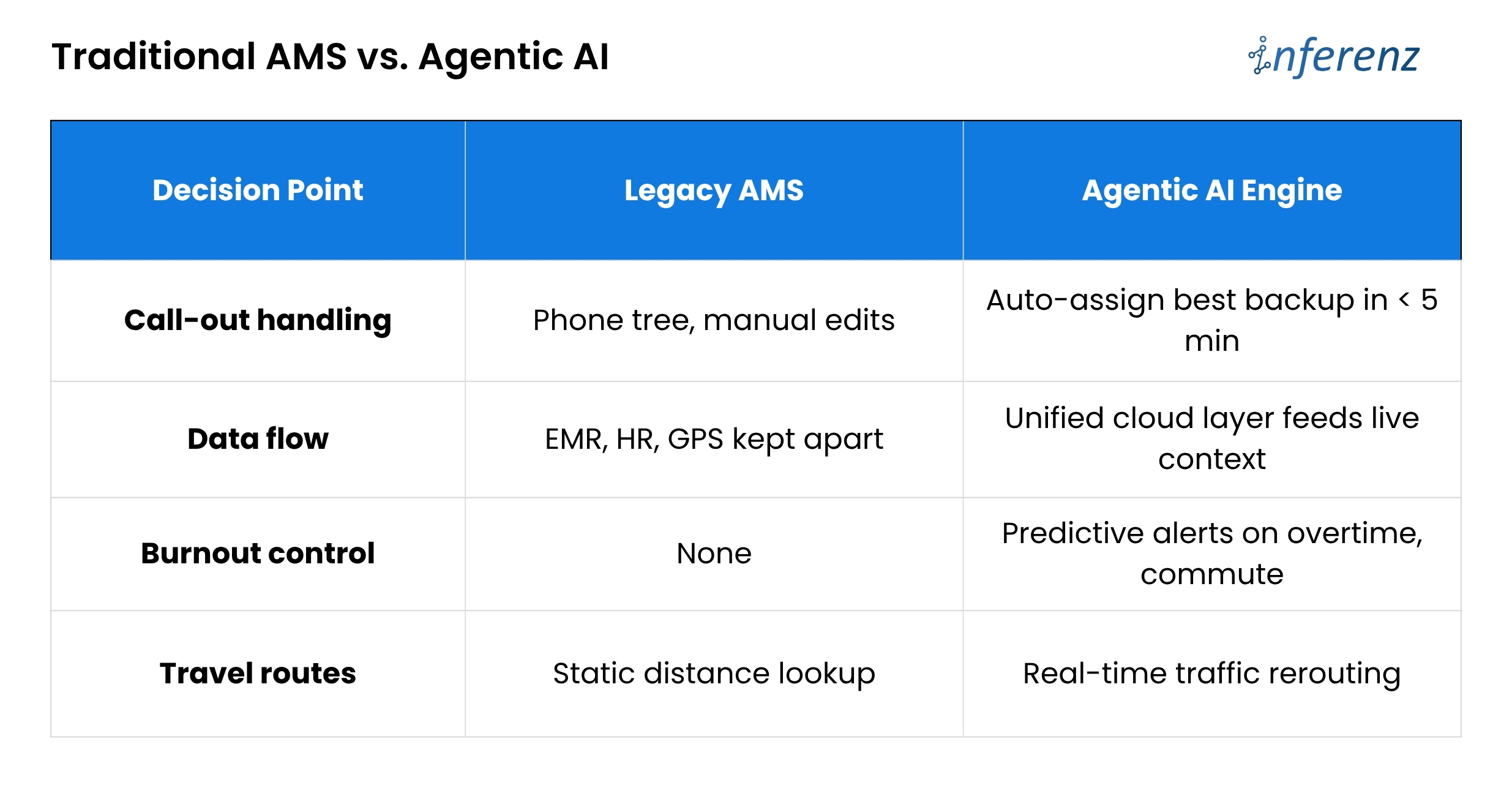

PCM: the heart of smart scheduling

Patient Caregiver Matching (PCM) sits at the core of the agentic engine. It blends hard data that includes skills, licenses, and shift history with soft cues like language, pet comfort, and even commute stress.

Each visit logged, each survey filled, feeds a feedback loop that sharpens the next match.

AI extracts relevant details and binds them in a single narrative. This narrative helps caregivers walk in fully prepared and better informed about their assigned patients.

How the patient-caregiver matching solution builds the perfect match

PCM makes thousands of micro-decisions that a human scheduler simply cannot track in real time.
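To make the idea of blending hard data with soft cues concrete, here is a minimal Python sketch of a weighted match score. It is an illustration under stated assumptions, not the actual PCM engine: the `Caregiver` and `Visit` fields, the weights, and the 30-mile commute ceiling are all hypothetical, and a real system would learn its weights from visit outcomes rather than hard-code them.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Caregiver:
    name: str
    skills: set
    languages: set
    commute_miles: float
    avg_rating: float  # 1.0 to 5.0, from past client feedback
    ok_with_pets: bool = True


@dataclass
class Visit:
    required_skills: set
    client_language: str
    has_pets: bool = False


# Illustrative weights only (assumption); they sum to 1.0.
WEIGHTS = {"rating": 0.4, "language": 0.3, "commute": 0.3}
MAX_COMMUTE_MILES = 30.0  # assumed distance at which commute fit drops to zero


def match_score(cg: Caregiver, visit: Visit) -> Optional[float]:
    """Return a 0-1 fit score, or None if a hard requirement fails."""
    # Hard data: a missing skill or a pet conflict rules the caregiver out.
    if not visit.required_skills <= cg.skills:
        return None
    if visit.has_pets and not cg.ok_with_pets:
        return None
    # Soft cues: each is scaled to 0-1, then blended by weight.
    rating = (cg.avg_rating - 1.0) / 4.0
    language = 1.0 if visit.client_language in cg.languages else 0.0
    commute = max(0.0, 1.0 - cg.commute_miles / MAX_COMMUTE_MILES)
    return (WEIGHTS["rating"] * rating
            + WEIGHTS["language"] * language
            + WEIGHTS["commute"] * commute)


def rank_caregivers(pool, visit):
    """Return (name, score) pairs for eligible caregivers, best fit first."""
    scored = [(cg.name, s) for cg in pool
              if (s := match_score(cg, visit)) is not None]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)
```

Separating the hard filters from the weighted soft cues keeps compliance rules non-negotiable while leaving the preference weights free to change as feedback comes in.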

Caregiver Connect and Smart Scheduling in Homecare – Three Phases

Phase 1 – Assist

- Role of AI:

The system watches current openings, checks skill, location, and past ratings, then lists the best caregiver for each visit. It also sends and tracks shift texts for you.

- Why it matters:

Speed is life in home care. By moving the “who is free and right for this client” search to an AI engine, booking time drops by half. A spot that once took twenty calls now locks in minutes.

- Effort change:

Coordinators still approve picks, yet their keyboard time falls about 50%. That freed hour can go to client follow-ups or staff coaching.

Phase 2 – Co-pilot

- Role of AI:

The tool no longer waits for you to act when a callout hits. It finds the next best caregiver, confirms the shift, and pushes a note into the EMR so nurses see the new name.

- Why it matters:

Missed visits tumble toward zero. Clients stay safe, and the agency avoids fines or angry phone calls on Friday night.

- Effort change:

Because the AI covers most last-minute gaps, schedulers work on harder tasks and see about 70% less day-to-day scramble.
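The co-pilot behavior described above can be sketched as a simple loop: offer the open visit to candidates in rank order, stop at the first acceptance, and record the change. This is only a sketch under assumptions. `offer_shift` and `notify_emr` are hypothetical hooks standing in for an agency's messaging and EMR integrations, not functions from any real product.

```python
from typing import Callable, Iterable, Optional


def cover_callout(
    visit_id: str,
    ranked_candidates: Iterable[str],
    offer_shift: Callable[[str, str], bool],
    notify_emr: Callable[[str, str], None],
) -> Optional[str]:
    """Offer an open visit to candidates in rank order until one accepts.

    offer_shift(caregiver, visit_id) returns True if the caregiver accepts.
    notify_emr(visit_id, caregiver) records the replacement for clinical staff.
    Both are hypothetical hooks supplied by the caller.
    """
    for caregiver in ranked_candidates:
        if offer_shift(caregiver, visit_id):
            notify_emr(visit_id, caregiver)  # nurses see the new name
            return caregiver
    return None  # nobody accepted: escalate to a human scheduler


# Usage with stand-in hooks: only "Dev" accepts the offer.
emr_notes = []
covered_by = cover_callout(
    "V-101",
    ["Carla", "Dev", "Sam"],
    offer_shift=lambda caregiver, visit: caregiver == "Dev",
    notify_emr=lambda visit, caregiver: emr_notes.append((visit, caregiver)),
)
```

Returning `None` when no one accepts keeps a human in the loop for the cases the automation cannot resolve.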

Phase 3 – Autonomous

- Role of AI:

The agent drafts the full weekly rota, juggles swaps, and even alerts HR when future demand will outrun supply. It chats with caregivers to shift times if traffic or family issues pop up.

- Why it matters:

Fill rate climbs to 98% and holds steady. Fewer gaps mean higher revenue, better reviews, and calmer staff.

- Effort change:

Coordinators shift to oversight. They scan dashboards, spot edge cases, and mentor teams. Routine scheduling work is now background noise handled by the system.

During Phase 1, a coordinator still approves matches, building trust. By Phase 3, the agent posts a full weekly roster, flags any legal or pay exceptions for quick sign-off, and frees leaders to focus on quality and growth.

A CXO-level Path to Patient–Caregiver Matching that Actually Works

Home-care agencies lose time and money because scheduling lives in silos. Skills sit in one system, vitals in another, PTO in a third.

When a caregiver calls out, coordinators must sift through them all. The fix is a Patient Caregiver Matching (PCM) engine that learns and acts in real time—but only if leaders roll it out with equal focus on data, change control, and trust.

Here is how to move from today’s chaos to tomorrow’s self-tuning roster, without hiring a small army of project managers.

Start with clean data, not clever code.

Feed every AMS, EMR, and HR stream into one secure lake through FHIR or other HIPAA-ready APIs. A single source of truth stops double entry and lets the AI see the full picture: licenses, wound alerts, commute times, even overtime risk. Until that lake is live, smart matching cannot begin.

Prove value in a 90-day branch pilot.

Switch PCM on for one location and track three simple numbers: shift fill rate, overtime hours, and coordinator minutes per booking. A branch-level test gives hard evidence, keeps risk low, and shows frontline staff that the tool helps rather than replaces them.
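As a rough illustration, the three pilot numbers can be computed from a plain list of shift records. The `ShiftRecord` fields below are assumptions made for this sketch; an agency would map them to whatever its AMS actually exports.

```python
from dataclasses import dataclass


@dataclass
class ShiftRecord:
    filled: bool
    overtime_hours: float
    coordinator_minutes: float  # time a coordinator spent on this shift


def pilot_metrics(shifts):
    """Summarize fill rate, overtime, and coordinator effort for a pilot."""
    if not shifts:
        raise ValueError("no shifts recorded for the pilot period")
    filled = [s for s in shifts if s.filled]
    total_minutes = sum(s.coordinator_minutes for s in shifts)
    return {
        "fill_rate": len(filled) / len(shifts),
        "overtime_hours": sum(s.overtime_hours for s in filled),
        # Effort spent on unfilled shifts still counts against each booking.
        "coordinator_minutes_per_booking": (
            total_minutes / len(filled) if filled else float("inf")
        ),
    }
```

Running the same function on the 90 days before the pilot and the 90 days of the pilot gives a like-for-like comparison.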

Move to “co-pilot” across the agency.

Once the pilot hits its marks, let PCM auto-cover call-outs everywhere. Keep one senior scheduler in an “air-traffic control” role to handle edge cases and to reassure teams that humans still guide policy. The daily scramble fades; missed visits trend toward zero.

Let the AI look three months ahead.

With real-time cover in place, turn on the forecasting lens. PCM scans referral trends, PTO calendars, and skill gaps, then warns HR before shortages hit. Growth continues without surprise overtime or rushed hiring.
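A very simple version of that forecasting lens might look like the sketch below. It assumes a naive linear trend in weekly demand hours and a flat staffing capacity reduced by approved PTO. A production forecaster would likely use referral data and richer models, so treat the function and its parameters as hypothetical.

```python
def project_shortfalls(recent_demand_hours, capacity_hours, pto_hours_by_week):
    """Flag future weeks where projected demand exceeds available hours.

    recent_demand_hours: weekly care hours delivered, oldest first.
    capacity_hours: total weekly hours the current staff can cover.
    pto_hours_by_week: approved time off for each upcoming week.
    Returns a list of (weeks_ahead, shortfall_hours) tuples.
    """
    if len(recent_demand_hours) < 2:
        raise ValueError("need at least two weeks of history for a trend")
    # Naive trend: average week-over-week change across the history.
    trend = (recent_demand_hours[-1] - recent_demand_hours[0]) / (
        len(recent_demand_hours) - 1
    )
    shortfalls = []
    for weeks_ahead, pto in enumerate(pto_hours_by_week, start=1):
        demand = recent_demand_hours[-1] + trend * weeks_ahead
        supply = capacity_hours - pto
        if demand > supply:
            shortfalls.append((weeks_ahead, demand - supply))
    return shortfalls
```

Passing twelve or thirteen entries in `pto_hours_by_week` covers the three-month horizon described above.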

Build trust into every decision.

A live roster run by AI only sticks if people believe it is fair and safe.

Each match stores a plain-language reason such as “Carla assigned for dementia skill, four-mile commute.” Hard caps on weekly hours, license scope, and labor law live inside the rule set, so the engine cannot overstep. Monthly bias scans compare assignments across age, gender, and minority status, and detected model drift triggers retraining. Redundant cloud zones keep scheduling live even if one data center fails.
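One way to picture hard caps and plain-language reasons working together is the sketch below. The 40-hour cap, the `Candidate` fields, and the function name are assumptions chosen for illustration; real limits depend on agency policy and applicable labor law.

```python
from dataclasses import dataclass

WEEKLY_HOUR_CAP = 40.0  # assumed cap; the real limit comes from policy and law


@dataclass
class Candidate:
    name: str
    licenses: set
    hours_this_week: float
    commute_miles: float


def assign_with_reason(candidate, required_license, skill_label, shift_hours):
    """Return (approved, reason), checking hard rules before approving."""
    if required_license not in candidate.licenses:
        return False, f"{candidate.name} blocked: lacks {required_license} license"
    if candidate.hours_this_week + shift_hours > WEEKLY_HOUR_CAP:
        return False, (
            f"{candidate.name} blocked: would exceed "
            f"{WEEKLY_HOUR_CAP:g}-hour weekly cap"
        )
    return True, (
        f"{candidate.name} assigned for {skill_label} skill, "
        f"{candidate.commute_miles:g}-mile commute"
    )


carla = Candidate("Carla", {"CNA"}, hours_this_week=30.0, commute_miles=4.0)
approved, reason = assign_with_reason(carla, "CNA", "dementia", shift_hours=8.0)
```

Because every decision, approved or blocked, returns a readable reason, coordinators can audit the engine without digging through model internals.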

The payoff

Weekend duty spreads evenly, time-off requests stick, and early fatigue signs rise to the surface before they become burnout. CXOs gain tighter control over cost and care quality without adding layers of back-office staff. Coordinators finally go home on time.

“Caregivers who gain more autonomy over their schedule report lower stress.” — Cleveland Clinic flexible scheduling study

Future-ready edge: tapping the private caregiver pool

Growth pressures will not spare preferred home health care brands. The patient-caregiver matching solution can open an on-demand bench of vetted private caregivers when internal staff hit capacity. The agent weighs cost, compliance, and continuity, then fills gaps without overtime blowouts.

This “elastic staffing” positions agencies as connected care hubs, not just schedule brokers. It also sidesteps the narrow talent funnel that hammers many healthcare AI companies today.

FAQs about the Patient Caregiver Matching solution by Inferenz

- What problem does the Patient Caregiver Matching (PCM) solution fix in home-care scheduling?

It cuts the costly overtime overruns that pile up when staff call out or shifts go unfilled. By learning from every visit, it matches the right aide in minutes and keeps clients from missing care.

- How does the Predictive Care Matching feature choose the best caregiver?

The feature weighs skills, licenses, client feedback, travel time, and live clinical alerts, then ranks caregivers by overall fit in real time.

- Will the system lower my agency’s overtime cost?

Yes. Agencies in pilot tests saw overtime hours fall because open shifts got covered early, not at the last second when rates spike.

- Can it handle a call-out at 7 p.m. on Friday?

It can. The tool auto-selects the next best caregiver, sends the shift offer, and updates your EMR and AMS without manual intervention.

- How does it guard against caregiver burnout churn?

The engine flags heavy caseloads, long commutes, and back-to-back double shifts, then spreads work more evenly to keep staff fresh.

- What data feeds the matching engine?

It pulls live inputs from EMR, AMS, HR, and GPS tools, ending the data silos that slow most schedulers.

- How soon will we see a higher shift fill rate?

Most branches notice smoother coverage inside 30 days, with clear fill-rate gains by the end of a 90-day pilot.

- Does it plug into our current EMR and AMS?

Yes. A unified data layer links to standard FHIR or vendor APIs, so you keep your existing systems while adding smarter matching.

- Is patient data secure and HIPAA-ready?

Data stays in an encrypted, cloud-based lake with strict access controls and full audit logs that meet HIPAA standards.